Automatic model switching

Automatic model switching routes each query to the most appropriate model: simple requests go to a small, fast model, and harder ones go to a larger model that takes longer but answers more accurately. This balances response speed against accuracy.

I like knowing why my smart assistant sometimes responds quickly and other times takes a bit longer. It makes me trust it more when I see it's using the right tools for the job.

- Faster Response Times: Smaller models can deliver quicker responses for simpler queries, enhancing user experience by reducing wait times.

- Different Styles: Different models may produce responses in various styles or levels of detail, helping to match user expectations more closely.

- Transparency Builds Trust: Showing which model handled each query sets user expectations and builds trust in how the AI operates.
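
The pattern can be sketched as a small router that picks a model per query and reports its choice. This is a minimal illustration, not a production design: the model names, the cue-word list, and the word-count threshold are all hypothetical, and a real system might instead use a trained classifier or cost and latency budgets.

```python
from dataclasses import dataclass

# Hypothetical model names, used only for illustration.
FAST_MODEL = "small-fast"
DEEP_MODEL = "large-accurate"

# Assumed cue phrases suggesting a query needs more careful reasoning.
COMPLEX_CUES = ("explain", "compare", "analyze", "why", "step by step")


@dataclass
class Route:
    model: str   # which model was chosen
    reason: str  # short explanation that can be shown to the user


def choose_model(query: str, max_simple_words: int = 12) -> Route:
    """Pick a model with a crude heuristic: query length plus cue phrases."""
    text = query.lower()
    if len(text.split()) > max_simple_words:
        return Route(DEEP_MODEL, "long query")
    for cue in COMPLEX_CUES:
        # Plain substring match; good enough for a sketch.
        if cue in text:
            return Route(DEEP_MODEL, f"contains '{cue}'")
    return Route(FAST_MODEL, "short, simple query")


def label(route: Route) -> str:
    """Build the indicator text that tells the user which model answered."""
    return f"Answered with {route.model} ({route.reason})"
```

Calling `label(choose_model("What time is it?"))` yields an indicator for the fast model, while a query such as "Compare these two contracts" is routed to the larger one. Returning the reason alongside the model name is what lets the interface show the transparency cue described above.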

More of the Witlist

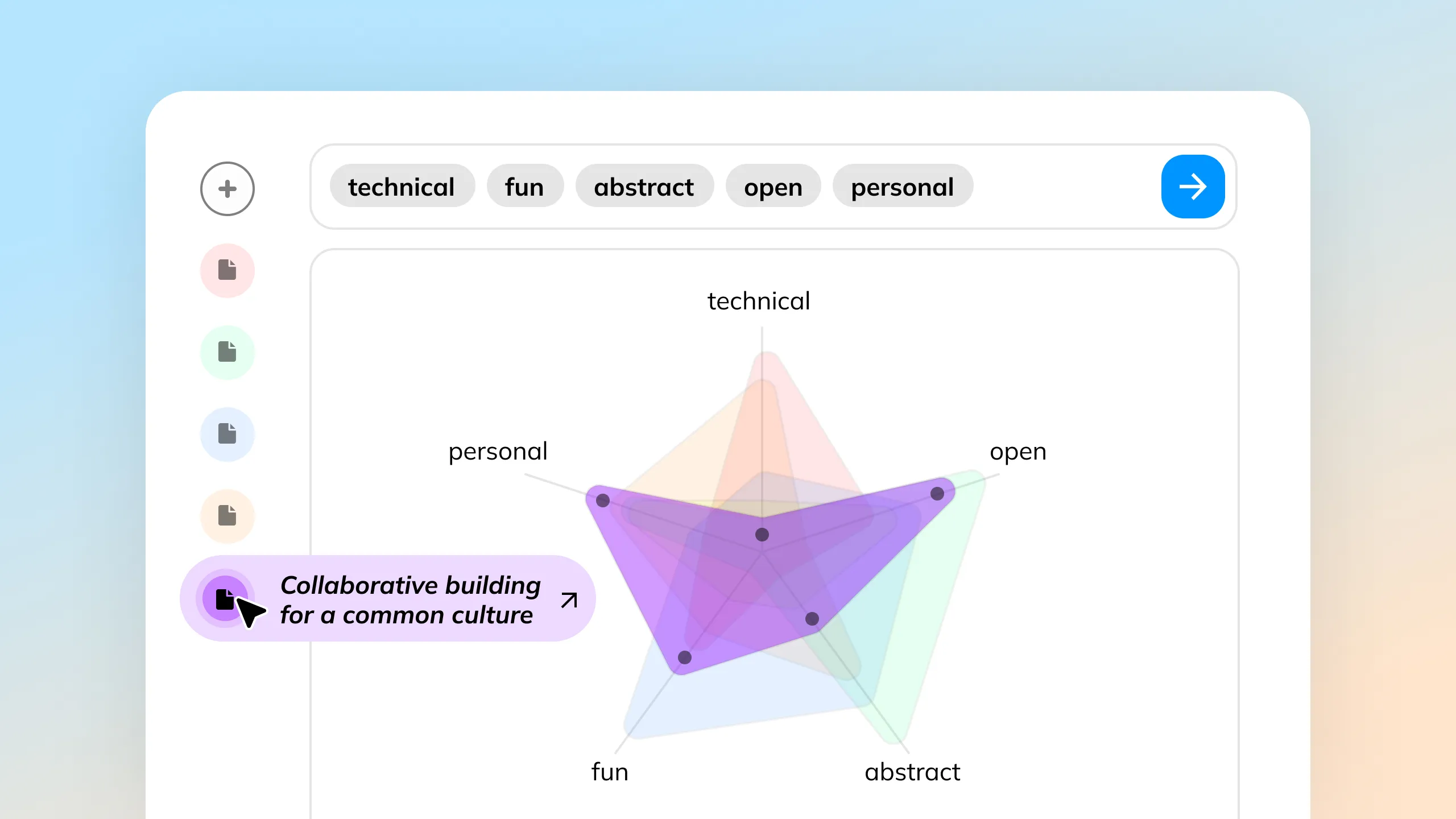

Generating multiple outputs and iteratively using selected ones as new inputs helps people uncover ideas and solutions, even without clear direction.

Referencing nested data from your database in the form of tags can simplify the creation of elaborate prompt formulas.

AI can enhance live chat streams by analyzing real-time data, identifying trends, and driving interactive elements like voting to boost audience engagement.

AI collaboration agents can act as writing partners that assist people by enhancing their content through transparent, easily understandable suggestions, while respecting the original input.

Comprehend and compare large documents by visualizing embeddings and their scores, enabling a clear and concise understanding of vast data sources in a single, intuitive visualization.

Letting people select text to ask follow-up questions provides immediate, context-specific information, enhancing AI interaction and exploration.